In our recent insight briefing, we explored key findings from ‘Teaching with GenAI,’ an independent report commissioned by Avallain and produced by Oriel Square Limited. Central to our discussion was the question: How is GenAI shaping the future of language education?

Effective GenAI in Language Education: A Reflection on Key Insights

St. Gallen, February 27, 2025 – On February 19th, Avallain hosted an online insight briefing, ‘Effective GenAI in Language Education.’ The session explored the findings of ‘Teaching with GenAI,’ an independent report commissioned by Avallain and produced by Oriel Square Limited. The discussion encouraged participants to consider the evolving role of Generative AI (GenAI) in education, including its advantages, risks and ethical implications, with a particular focus on language teaching.

The Reality of AI Tools in Language Education

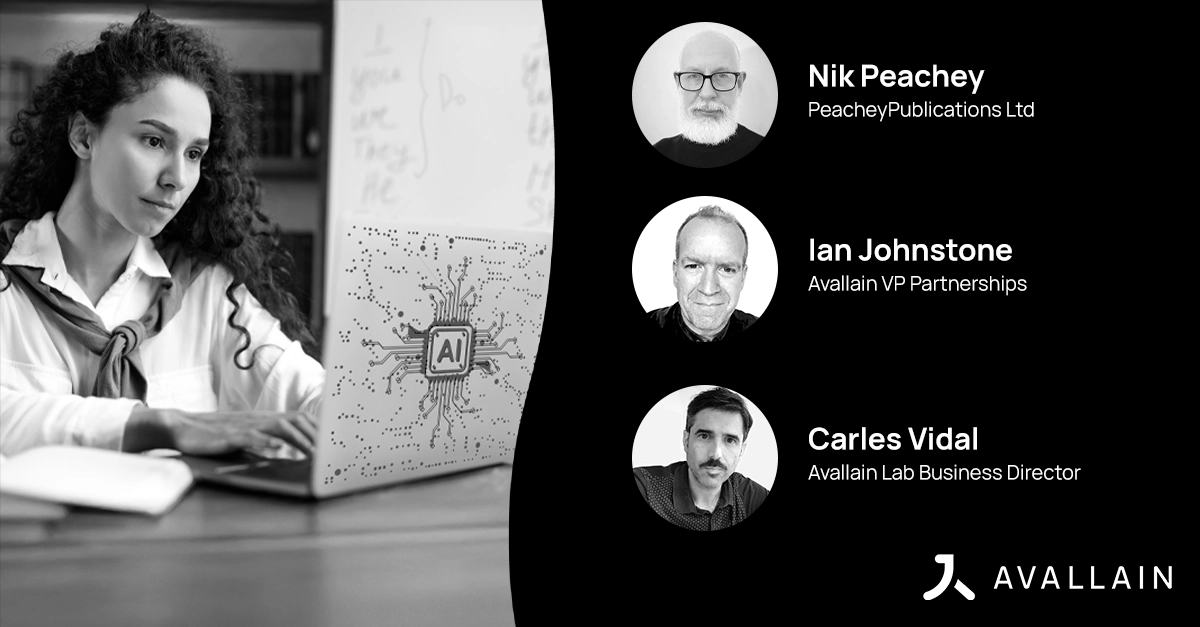

Moderated by Giada Brisotto, Marketing Project Manager at Avallain, the panel featured:

- Nik Peachey, educator, author and edtech consultant.

- Carles Vidal, Avallain Lab Business Director.

- Ian Johnstone, Avallain VP Partnerships.

Nik Peachey noted the rapid proliferation of AI tools, describing the current moment as the ‘Wild West’, with new tools emerging almost daily. ‘In the time we’ve been in this webinar, ten new AI-powered language learning tools have probably been launched.’ In his view, while enthusiasm is high and GenAI tools are now increasingly accurate at language levelling, teachers often lack the resources to assess which tools genuinely enhance learning.

Carles Vidal highlighted that, while AI has the potential to empower teachers, a lack of proper AI training often leaves them experimenting in isolation. ‘Educators need to receive AI training to critically assess the trustworthiness of the GenAI tools they use in the classroom.’

The Challenge of Effective AI Integration

The discussion underscored the importance of integrating AI as a support tool rather than a replacement for pedagogical expertise. Ian Johnstone pointed out that while tools such as TeacherMatic allow educators to generate tailored lesson plans, worksheets and discussions efficiently, the quality of AI-generated content still requires human oversight. ‘Creating prompts that output a consistent, well-levelled, targeted response requires experimentation. That’s why we need tool sets that sit on top of AI models and help teachers find exactly what they need with consistency and high quality.’

Nik Peachey reinforced this, stating that the role of AI should be collaborative rather than authoritative. He described a classroom exercise where students co-write stories with AI, taking turns to contribute paragraphs. ‘It’s about guiding students through the creative process, not letting AI do the thinking for them’. For Peachey, this approach fosters deeper engagement and encourages students to develop critical thinking skills.

Ethical Considerations and the Need for AI Literacy

The ethical implications of AI in education were a major focus of the discussion. The independent report commissioned by Avallain found that only 38% of UK educators feel confident using AI in the classroom, despite increasing familiarity with AI concepts.

‘There’s a lot of concern around AI bias’, Peachey noted. ‘Many teachers are asking, “How do I know if this tool is truly neutral?”’ He called for greater transparency from AI providers, stressing that education should drive AI development, not the other way around.

Johnstone advocated rigorous pilot testing of AI tools such as TeacherMatic: ‘If we don’t test AI tools properly in real classrooms, we risk reinforcing existing inequalities rather than solving them. Avallain’s approach involves ongoing collaboration with institutions to ensure AI-generated materials align with educational standards.’

AI as a Teacher’s Tool, Not a Replacement

The panel unanimously acknowledged that a common concern among educators is whether AI will replace teachers. However, the panellists believe that, while AI can assist with lesson planning and material generation, it cannot replicate the human elements of teaching: motivation, encouragement and personalised guidance.

‘An AI can tell a student “Well done”, but does the student truly believe it?’ Peachey asked. ‘A teacher’s encouragement carries a sincerity that AI can’t replicate.’ Johnstone added that AI should be viewed as a co-pilot, allowing teachers to focus on student engagement and deeper learning.

Summarising the Key Takeaways

The webinar reinforced several noteworthy conclusions:

- AI tools are evolving rapidly, but their effectiveness depends on a careful and structured approach.

- Teachers need guidance and training to navigate the AI landscape effectively.

- Ethical concerns such as bias and data security must be addressed to build trust in AI adoption.

- AI is a support tool, not a substitute for human interaction and teaching expertise.

- Education professionals must play an active part in shaping how AI is used to ensure it aligns with pedagogical values.

Rather than fearing AI, educators should engage with it critically. By shaping its use with integrity and curiosity, teachers can harness the potential of AI while safeguarding the human elements of education that make learning meaningful.

To learn more about ‘Teaching with GenAI’ and how AI is transforming language education, click here.

About Avallain

At Avallain, we are on a mission to reshape the future of education through technology. We create customisable digital education solutions that empower educators and engage learners around the world. With a focus on accessibility and user-centred design, powered by AI and cutting-edge technology, we strive to make education engaging, effective and inclusive.

Find out more at avallain.com

About TeacherMatic

TeacherMatic, a part of the Avallain Group since 2024, is a ready-to-go AI toolkit for teachers that saves hours of lesson preparation by using scores of AI generators to create flexible lesson plans, worksheets, quizzes and more.

Find out more at teachermatic.com


Contact:

Daniel Seuling

VP Client Relations & Marketing